How do degrees of freedom influence t values when calculating confidence intervals?

The confidence interval about an effect indicates how the estimate of that effect would vary if the study were repeated many times. For example, suppose we found that beer was better than vodka at reducing pain response by 4 points (95% CI 2 to 6 points) on a 10-point scale. This means subjects who drank beer experienced 4 points less pain on average than those who drank vodka. If the study were repeated many times, 95% of the intervals calculated this way would contain the true effect. Informally, beer plausibly reduces pain by as little as 2 points or as much as 6 points compared with vodka.

So confidence intervals indicate how precise an estimate is. If we had tested only 5 instead of 30 subjects, we might have found that beer reduced pain response by 4 points (95% CI 0 to 8 points) compared with vodka. Here the true reduction could plausibly be anywhere from 0 to 8 points. Because the 95% CI reaches zero, we cannot rule out that there is no difference between beer and vodka, and the wide interval shows that the estimate of the effect of beer vs vodka on pain is very imprecise.

When calculating confidence intervals about an effect (e.g. a mean between-group difference), we use the sampling distribution and a standard score to tell us where the effect falls in the sampling distribution. For a Normal distribution, this standard score is known as the z score:

$$z = \frac{\bar{x} - \mu}{\sqrt{\sigma^2 / n}}$$

where x̄ is the sample mean, μ the population mean, σ² the population variance and n the sample size. For an effect to fall within the central 95% of the sampling distribution, we use z scores of ±1.96.
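
A minimal Python sketch of the z score and the ±1.96 cutoff, using only the standard library's `statistics.NormalDist`. The function names and example numbers are hypothetical. `z_interval_95` assumes the population variance is known (which is what justifies the z cutoff rather than a t value) and treats the estimate as a single mean; a between-group difference would need the variance of the difference instead.

```python
from math import sqrt
from statistics import NormalDist

def z_score(sample_mean: float, pop_mean: float, pop_var: float, n: int) -> float:
    """Standard score of a sample mean: (x̄ - μ) / sqrt(σ² / n)."""
    return (sample_mean - pop_mean) / sqrt(pop_var / n)

# Two-sided 95%: 2.5% in each tail, so use the 97.5th percentile of N(0, 1).
Z_95 = NormalDist().inv_cdf(0.975)

def z_interval_95(estimate: float, pop_var: float, n: int) -> tuple[float, float]:
    """95% CI around an estimate when the population variance is known (assumption)."""
    half_width = Z_95 * sqrt(pop_var / n)
    return estimate - half_width, estimate + half_width

print(round(Z_95, 2))               # 1.96
print(z_score(105, 100, 225, 9))     # 1.0
# Same hypothetical variance, fewer subjects: the interval gets wider.
print(z_interval_95(4.0, 9.0, 30))
print(z_interval_95(4.0, 9.0, 5))
```

The last two lines mirror the 30- versus 5-subject comparison: with the same spread, the half-width scales with 1/√n, so the smaller study gives the wider interval.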

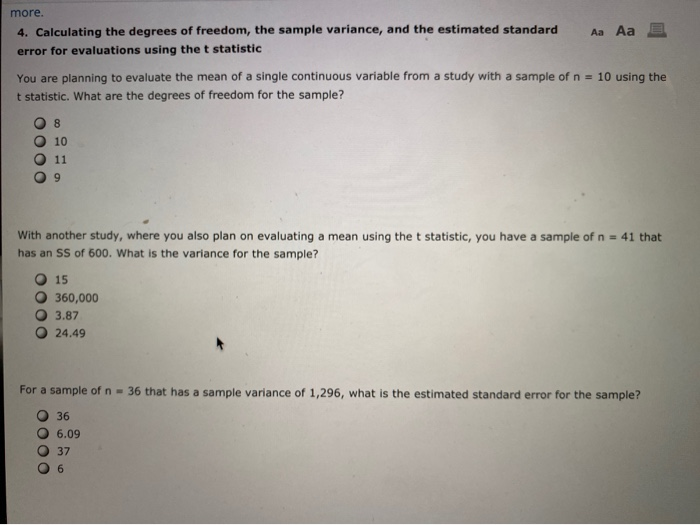

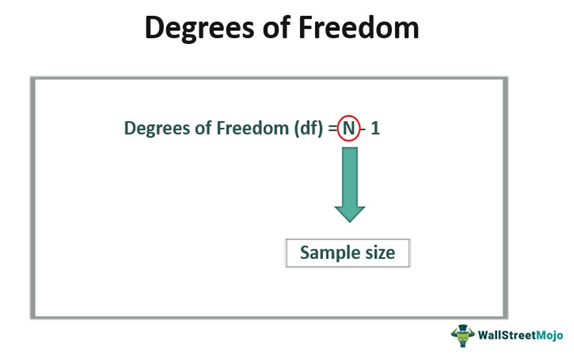

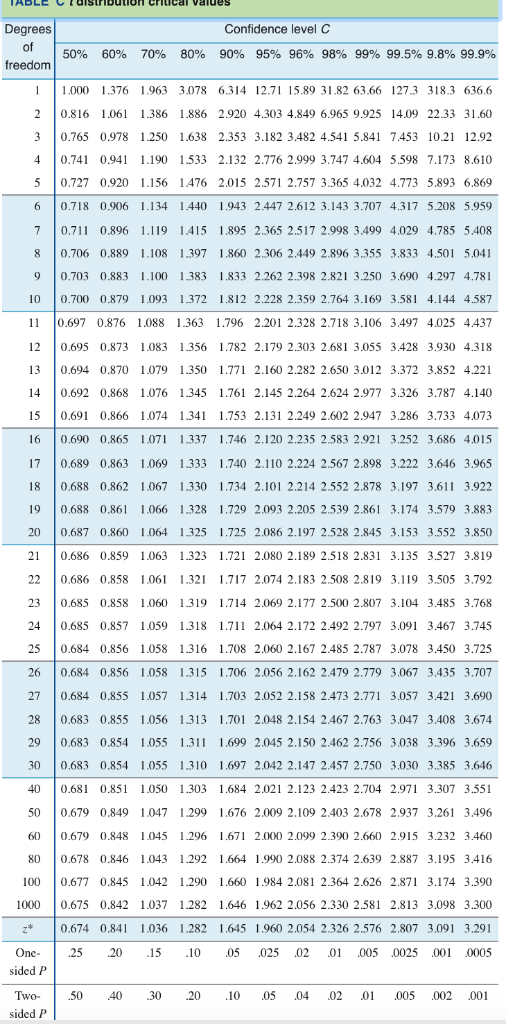
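
To see how degrees of freedom influence t values, the two-sided 95% critical value can be computed for several df. This is a teaching sketch that assumes only the Python standard library (no SciPy): the hypothetical helpers `t_cdf` and `t_critical` integrate the Student t density with Simpson's rule and bisect for the value that leaves 2.5% in each tail. A statistics library would be the better choice in practice.

```python
from math import exp, lgamma, log, log1p, pi

def t_cdf(x: float, df: int, steps: int = 2000) -> float:
    """P(T <= x) for x >= 0, using Simpson's rule on [0, x].

    The t density is symmetric about zero, so P(T <= 0) = 0.5 and only the
    positive half is integrated. `steps` must be even for Simpson's rule.
    """
    # log of the normalizing constant Γ((ν+1)/2) / (√(νπ) Γ(ν/2))
    log_c = lgamma((df + 1) / 2) - lgamma(df / 2) - 0.5 * log(df * pi)

    def density(u: float) -> float:
        return exp(log_c - (df + 1) / 2 * log1p(u * u / df))

    h = x / steps
    total = density(0.0) + density(x)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * density(i * h)
    return 0.5 + total * h / 3


def t_critical(confidence: float, df: int) -> float:
    """Two-sided critical value: leaves (1 - confidence) / 2 in each tail."""
    target = 1 - (1 - confidence) / 2
    lo, hi = 0.0, 100.0          # 100 comfortably exceeds t at 95% for any df >= 1
    for _ in range(50):          # bisection: the CDF is increasing in x
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


for df in (1, 2, 5, 10, 30, 100):
    print(df, round(t_critical(0.95, df), 3))
```

The printed values should shrink steadily toward the Normal cutoff of about 1.96 as df grows; standard tables give 12.706 for 1 df and 2.228 for 10 df.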

The core problem with reporting a mean and an estimated standard deviation is that, while they describe the statistical behavior of our finite data set, they don't directly answer the question "how good is the answer?" To do this we have to explicitly correct for the finite number of observations: a Normal distribution actually presupposes an infinite data set, which we clearly will never have.

The approach developed by W. S. Gosset (who went by the pseudonym "Student") requires finding the value of the t distribution, for the number of observations we have, that corresponds to the probability at which we want to answer the "how good?" question. A full theoretical description is developed in Shafer & Zhang, Ch. …, or in Garland, Nibler & Shoemaker, pp. 433ff.

[Table: The Student t distribution, with one-sided (P1) and two-sided (P2) probability columns]

You will initially have calculated the mean of the data, x̄, and the estimated standard deviation, Sₘ, from the data set, after applying the Q test for discordance if necessary. To calculate the confidence interval, we use the two-sided probability P2. In chemistry we normally report a 95% confidence interval, so select the column headed P2 = 0.95. It is, of course, possible to present results with other probabilities of containing the "real" value, but 95% corresponds to roughly two standard deviations (1.96 for a Normal distribution) on either side of the "true" mean.

We also have to account for "degrees of freedom," listed as df in the table but often given the Greek symbol ν. If we had not calculated anything from the data set, this would equal N, the number of observations. However, we have already used the data to find the mean, x̄, so we have used up one degree of freedom, and df is now N − 1. In a linear regression analysis, we subtract one degree of freedom for every parameter the analysis returns: in the simple one-variable case, the regression gives a slope and an intercept, so df = N − 2. In a previous post we saw that t distributions with more degrees of freedom approximate the Normal distribution more closely, and degrees of freedom are increased by testing more subjects.

The result is reported as x̄ ± Δ, where Δ is the half-width of the 95% confidence interval, calculated from t, the value read from the table, and Sₘ, the estimated standard deviation.
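
The table lookup can be sketched in Python, assuming the standard textbook form Δ = t·s/√N, where s is the sample standard deviation (the post's own equation did not survive). If Sₘ denotes the standard deviation of the mean, s/√N, this is the same as Δ = t·Sₘ. The table name `T_TABLE_P2_95`, the function name and the replicate data are hypothetical; the table holds standard two-sided P2 = 0.95 values for df = 1 to 10 only.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided P2 = 0.95 critical values of Student's t, indexed by df.
# Standard table values; extend the table for larger data sets.
T_TABLE_P2_95 = {
    1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
    6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228,
}

def confidence_interval_95(data: list[float]) -> tuple[float, float]:
    """Return (x̄, Δ) so the result can be reported as x̄ ± Δ.

    Assumes Δ = t · s / √N, with s the sample standard deviation and
    df = N - 1 because one degree of freedom was spent computing x̄.
    """
    n = len(data)
    df = n - 1
    if df not in T_TABLE_P2_95:
        raise ValueError(f"no table entry for df = {df}")
    x_bar = mean(data)
    s = stdev(data)              # sample standard deviation (divides by N - 1)
    s_mean = s / sqrt(n)         # standard deviation of the mean
    delta = T_TABLE_P2_95[df] * s_mean
    return x_bar, delta

# Hypothetical replicate measurements (N = 5, so df = 4 and t = 2.776)
x_bar, delta = confidence_interval_95([10.1, 10.3, 9.9, 10.2, 10.0])
print(f"{x_bar:.2f} ± {delta:.2f}")   # prints 10.10 ± 0.20
```

For a straight-line fit the same lookup applies with df = N − 2, since the slope and intercept each use up a degree of freedom.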
